Crawling your website

While doing a website audit, our crawler makes multiple requests to your website. We'll open your sitemap, crawl pages for more links, and run an SEO check on every page.

Although we try to keep the number of requests as low as possible, we inevitably need to open a lot of pages on your site. For large websites, this can mean thousands of requests. To make sure this doesn't affect your website, we leave enough time between requests, and whenever your server seems too busy, we slow down further.
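
The exact pacing rules aren't described here, but the general idea can be sketched. The Python example below is a hypothetical illustration, not SiteGuru's actual crawler. It waits after every request, doubles the wait (up to a cap) when the server answers 429 Too Many Requests or a 5xx error, and eases back toward the minimum when responses look normal. The class name, the 1 to 60 second range and the 0.9 recovery factor are all assumptions.

```python
import time
from dataclasses import dataclass


def looks_busy(status: int) -> bool:
    """429 Too Many Requests and 5xx server errors suggest slowing down."""
    return status == 429 or 500 <= status <= 599


@dataclass
class PoliteDelay:
    """Adaptive pause between requests. Hypothetical values, not SiteGuru's."""

    delay: float = 1.0  # seconds to wait after the current request
    min_delay: float = 1.0
    max_delay: float = 60.0

    def update(self, status: int) -> float:
        """Adjust the delay based on the last response and return it."""
        if looks_busy(status):
            # Server looks overloaded: back off exponentially, up to a cap.
            self.delay = min(self.delay * 2, self.max_delay)
        else:
            # Normal response: ease back toward the minimum delay.
            self.delay = max(self.delay * 0.9, self.min_delay)
        return self.delay


def crawl(urls, fetch, pacer=None, sleep=time.sleep):
    """Fetch URLs one at a time, pausing after each request.

    `fetch` is any callable that takes a URL and returns an HTTP status code.
    """
    if pacer is None:
        pacer = PoliteDelay()
    results = {}
    for url in urls:
        status = fetch(url)
        results[url] = status
        sleep(pacer.update(status))
    return results
```

Passing `fetch` and `sleep` in as parameters keeps the pacing logic separate from the HTTP client, so it can be tried out without touching a real server.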

Sometimes, though, a server, CDN (Content Delivery Network), or firewall may block our requests. This can cause issues like:

- Not seeing any pages in the report

- Seeing a lot of error pages

- Seeing a lot of broken links

I'm not seeing any pages

If you're not seeing any pages in your site report, it most likely means our crawler could not access your website at all. Without access, we can't open sitemaps or follow links, so the report stays empty. Please check with your technical team or hosting provider whether they're blocking crawlers from accessing the site. If so, ask them to whitelist our user agent.
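
One rough way to check for a user-agent block yourself is to request the same page twice, once with a crawler user agent and once with a browser user agent, and compare the status codes. Below is a minimal sketch using Python's standard library. It assumes the crawler's User-Agent header contains the token `SiteGuruCrawler` (the full header SiteGuru sends may differ), and the browser string is only an example.

```python
import urllib.error
import urllib.request

# Assumption: SiteGuru's User-Agent header contains this token; the full
# header it sends may be longer.
CRAWLER_UA = "SiteGuruCrawler"
# Example desktop browser User-Agent, used only for comparison.
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"


def fetch_status(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """Return the HTTP status of a GET request sent with the given User-Agent.

    Network failures (DNS errors, refused connections) raise urllib.error.URLError.
    """
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # 4xx and 5xx responses raise HTTPError, but the code is what we want.
        return err.code


def looks_blocked(crawler_status: int, browser_status: int) -> bool:
    """True if the browser request succeeds while the crawler request fails."""
    return browser_status < 400 <= crawler_status
```

For example, compare `fetch_status(url, CRAWLER_UA)` with `fetch_status(url, BROWSER_UA)` for a page on your site. A 403 for the first and a 200 for the second points to a user-agent rule. Because firewalls may also filter by IP address or country, a successful test from your own network doesn't guarantee that our servers get through.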

I'm seeing a lot of error pages

If some pages work but others don't, we may be crawling your site too quickly. This is unusual, because our crawler normally slows down as soon as your website starts returning errors. In most cases, running a new crawl fixes this. If it doesn't, please contact us.
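
The mix of status codes among the error pages can hint at the cause. The sketch below tallies them using a rule of thumb: 429 and 503 usually mean the server wants requests to slow down, while 401 and 403 on pages that open fine in a browser more often point to a firewall or bot filter. This grouping is a heuristic, not how SiteGuru classifies errors, and the input format (a mapping of URL to status code) is hypothetical.

```python
from collections import Counter

# Heuristic grouping of HTTP status codes (an assumption, not a standard).
RATE_LIMITED = {429, 503}
BLOCKED = {401, 403}


def summarize(statuses: dict[str, int]) -> Counter:
    """Count crawled URLs by likely cause of failure."""
    counts = Counter()
    for status in statuses.values():
        if status in RATE_LIMITED:
            counts["rate_limited"] += 1
        elif status in BLOCKED:
            counts["blocked"] += 1
        elif status >= 400:
            counts["other_error"] += 1
        else:
            counts["ok"] += 1
    return counts


def likely_cause(statuses: dict[str, int]) -> str:
    """Return a one-line hint based on which error group dominates."""
    counts = summarize(statuses)
    if counts["rate_limited"] > counts["blocked"]:
        return "Server asked us to slow down: try a new crawl."
    if counts["blocked"] > 0:
        return "Requests look blocked: whitelist the crawler."
    return "No clear pattern."
```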

I'm seeing a lot of broken links

Our link checker checks all internal and external links on your site. If you're seeing a lot of broken internal links, please run a new crawl. Just like our crawler, the link checker slows down when your server has trouble keeping up, so a new run usually gives better results.
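
A link is usually called internal when it points to the same host as the page it appears on. The sketch below shows one way to make that split with Python's standard library and to pick out the internal links whose check returned an error. Treating `www.` and the bare domain as the same site is an assumption, and SiteGuru's link checker may draw the line differently; `status_of` is a hypothetical callable that returns a link's HTTP status.

```python
from urllib.parse import urljoin, urlparse


def normalize_host(url: str) -> str:
    """Lower-case host with a leading 'www.' removed (assumption: same site)."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def split_links(page_url: str, hrefs: list[str]) -> tuple[list[str], list[str]]:
    """Resolve each href against the page URL and split into internal/external."""
    site = normalize_host(page_url)
    internal, external = [], []
    for href in hrefs:
        absolute = urljoin(page_url, href)
        if urlparse(absolute).scheme not in ("http", "https"):
            continue  # skip mailto:, tel:, javascript: and similar links
        if normalize_host(absolute) == site:
            internal.append(absolute)
        else:
            external.append(absolute)
    return internal, external


def broken_internal(internal: list[str], status_of) -> list[str]:
    """Return internal links whose status code (from `status_of`) is 400 or higher."""
    return [url for url in internal if status_of(url) >= 400]
```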

Solution: whitelisting SiteGuru's User Agent

To solve this, you can ask your technical team or hosting provider to whitelist requests from the user agent SiteGuruCrawler. All our requests are made with that user agent, so adding it to the whitelist should give us access to your site.
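
How the exception is added depends on your firewall or CDN, which often let you set it up as a rule in their own configuration. For a Python web app that runs its own bot filter, the logic might look like the minimal WSGI middleware sketched below. `BotFilter` and `is_suspicious` are hypothetical stand-ins for whatever filtering you already have. Keep in mind that a User-Agent header is easy to fake, which is why it is sometimes combined with an IP check.

```python
from typing import Callable, Iterable, Optional

# Tokens to let through. "SiteGuruCrawler" comes from this article;
# add others as needed.
ALLOWED_UA_TOKENS = ("SiteGuruCrawler",)


def is_allowlisted(user_agent: Optional[str]) -> bool:
    """True if the User-Agent header contains an allowlisted crawler token."""
    if not user_agent:
        return False
    ua = user_agent.lower()
    return any(token.lower() in ua for token in ALLOWED_UA_TOKENS)


class BotFilter:
    """Hypothetical WSGI middleware: block suspected bots unless allowlisted."""

    def __init__(self, app: Callable, is_suspicious: Callable[[dict], bool]):
        self.app = app
        self.is_suspicious = is_suspicious  # your existing bot-detection rule

    def __call__(self, environ: dict, start_response: Callable) -> Iterable[bytes]:
        user_agent = environ.get("HTTP_USER_AGENT")
        if self.is_suspicious(environ) and not is_allowlisted(user_agent):
            start_response("403 Forbidden", [("Content-Type", "text/plain")])
            return [b"Forbidden"]
        # Allowlisted or not suspicious: pass the request to the real app.
        return self.app(environ, start_response)
```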

Blocked by robots.txt settings

SiteGuru follows restrictions in your robots.txt file. If we're not allowed to crawl the site because of robots.txt instructions, we'll show this as a warning message in the site report.

If this is the case, please add the following to your robots.txt file:

User-agent: SiteGuruCrawler
Allow: /
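
To confirm that your rules do what you expect, you can feed your robots.txt to a parser. Here is a sketch using Python's built-in `urllib.robotparser`. It is not the parser SiteGuru uses, so treat the result as a sanity check rather than a guarantee. The two SiteGuruCrawler lines are kept together with no blank line between them: a blank line can end a group, and this parser, for one, then ignores the orphaned `Allow` rule.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, url: str, user_agent: str = "SiteGuruCrawler") -> bool:
    """Check a robots.txt body (as text) against a URL for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Example: all other bots are blocked, but SiteGuruCrawler is allowed.
ROBOTS_TXT = """\
User-agent: *
Disallow: /

User-agent: SiteGuruCrawler
Allow: /
"""
```

With this file, `crawler_allowed(ROBOTS_TXT, "https://www.example.com/blog/")` returns `True`, while the same call with `user_agent="OtherBot"` returns `False`.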

Solution: changing the User Agent

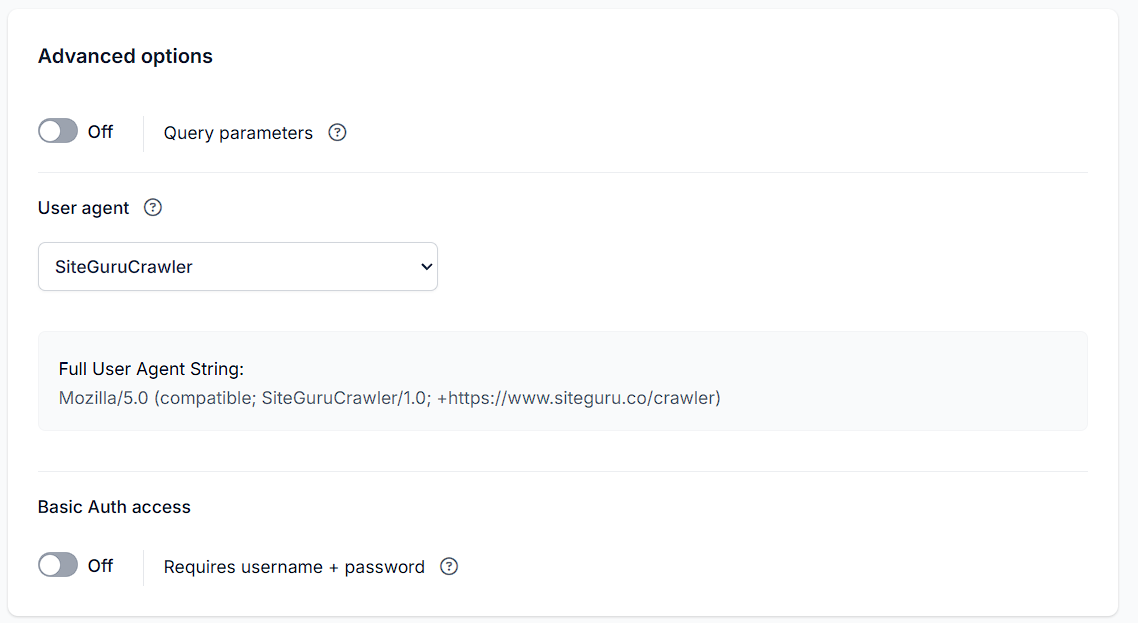

If you're not able to whitelist our user agent, you can change the user agent that we use to crawl the site. By default, we use SiteGuruCrawler. This can be changed from Site Settings > Advanced options.

If you've run into crawl issues before, choose Googlebot or Bingbot.

Whitelist SiteGuru IPs

If this doesn't work, you can try whitelisting our IPs. We crawl from the following IP addresses:

- 54.78.126.239

- 52.17.9.255

- 34.246.125.226

- 3.248.73.25

- 34.253.76.180
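
If you want to check your server or firewall logs for these addresses, the test is a simple membership check. A sketch using Python's `ipaddress` module and the five addresses listed above follows. How you obtain the client address (for example behind a proxy or CDN) depends on your setup and is left out here.

```python
import ipaddress

# Crawler IPs as listed in this article. The list may change over time.
SITEGURU_IPS = frozenset(
    ipaddress.ip_address(ip)
    for ip in (
        "54.78.126.239",
        "52.17.9.255",
        "34.246.125.226",
        "3.248.73.25",
        "34.253.76.180",
    )
)


def is_siteguru_ip(remote_addr: str) -> bool:
    """True if the client address is one of the listed SiteGuru crawler IPs."""
    try:
        return ipaddress.ip_address(remote_addr.strip()) in SITEGURU_IPS
    except ValueError:
        return False  # not a valid IPv4 or IPv6 address
```

For example, `is_siteguru_ip("54.78.126.239")` returns `True`.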

Country filters

Are you blocking specific countries? Our servers are based in Ireland, so make sure you're not blocking traffic from Ireland.

Still having issues?

If you still have issues getting your website crawled, please contact us.